Estimating hospitals’ all-payer volume of cancer surgeries from Fee-for-Service Medicare claims

Introduction

Prior research has established a relationship between volume and outcomes in certain surgical procedures for cancer, prompting both government and private organizations to endorse publicly reporting hospital surgical volume (1-5). Volume information is an objective measure that could be used by patients, providers, payers and policymakers to inform the choice of hospital for cancer surgery, policy initiatives, and quality improvement efforts in the absence of hospital-level outcomes data (6). In addition, provider volume is one of the many metrics that cancer patients are interested in when choosing a provider for their cancer care (7).

Existing administrative claims can be leveraged to identify cancer surgery volume. However, current reporting of cancer surgery volume is limited and inconsistent. While several organizations have released select information on hospitals’ cancer surgical volume, the data available to patients and researchers remain insufficient. For example, public reports generated by US News and World Report display a volume range for lung and colorectal cancer surgeries, but only for patients with Medicare coverage and for hospitals that meet a volume threshold (8,9). The Leapfrog Group publishes hospital volume data for lung, pancreatic, esophageal and rectal cancer surgeries, but these data are voluntarily self-reported by hospitals (10). Government-commissioned public reporting of all-payer cancer surgery volume is minimal, and only certain states, such as California, have pursued such an initiative (11). At the provider level, specific hospital systems such as Johns Hopkins University Hospitals have also made efforts to report volume for certain surgeries, such as esophagus, lung, pancreas and rectal resections (12). Despite these efforts, there is still no comprehensive resource that reports the number of cancer surgeries performed at each hospital nationally.

The FFS Medicare claims administrative data source offers a potential foundation from which to create a national resource of hospital cancer surgical volume. Since FFS Medicare claims represent a subset of all patients undergoing cancer surgery, we first explored the extent to which hospitals’ FFS Medicare volume is representative of all-payer volume. Second, we determined if FFS Medicare claims, in conjunction with publicly available data sources, could be used to reliably predict the all-payer volume of cancer surgeries performed.

Methods

Data sources

To calculate all-payer surgical volume for a subset of hospitals, we obtained State Inpatient Discharge (SID) datasets for Arizona [2012–2013], California [2011], Maryland [2011–2013], Washington [2011–2012] and Wisconsin [2012–2013] from the Healthcare Cost and Utilization Project (HCUP) and the Hospital Inpatient Data file for Florida [2012–2013] from the Agency for Health Care Administration for the State of Florida (AHCA) (13,14). States were chosen for their geographic diversity, range of state-level Medicare Advantage penetration proportions and availability of a hospital identifier for data linkage.

To obtain a subset of all-payer volume, we used FFS inpatient claims [2011–2013] from the Centers for Medicare & Medicaid Services (CMS) to calculate FFS Medicare surgical volume by hospital. We chose the FFS Medicare dataset because it includes all hospitals treating patients covered under the FFS Medicare program, which is the single largest payer for healthcare in the United States. We used the American Hospital Association (AHA) Annual Hospital Survey Database [2012] to link providers between the all-payer data and the FFS Medicare data (15).

The National Inpatient Sample (NIS; 2012), a 20-percent stratified sample of all discharges from U.S. community hospitals, was used to obtain the national proportion of patients who are aged 65 and older receiving cancer surgery (16). Medicare Advantage (MA) county-level penetration files [2011–2013] were used to calculate the proportion of eligible beneficiaries who are enrolled in Medicare Advantage in a hospital’s county (17).

Study sample

We included all hospitals that performed at least one cancer-directed surgery based on the all-payer discharge data using a previously validated approach (18). The cancer types included are: bones & joints, breast, colorectal, gynecological, gastroesophageal, kidney, liver, lung/pleura/bronchus, ovarian, pancreas, prostate, and sarcoma; cancer sites were analyzed in aggregate. We calculated the inpatient surgical volume for each hospital and year of data available. Therefore, the unit of analysis was the hospital-year, i.e. the same hospital over three years counted as three separate observations. We excluded hospitals that we could not link across data sources (N=680). Kaiser Permanente hospitals were also excluded because they are a part of an integrated delivery network and their volume could not be accurately captured in our FFS Medicare data (N=15).
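To make the unit of analysis concrete, the following is a minimal sketch of counting surgical volume per hospital-year. The record layout, hospital identifiers, and the function name `hospital_year_volume` are hypothetical and are not taken from the study's data files.

```python
from collections import Counter
from typing import Iterable, Tuple

# Hypothetical input: one (hospital_id, discharge_year) tuple per
# cancer-directed surgery found in the discharge data.
discharges = [
    ("H001", 2011), ("H001", 2011), ("H001", 2012),
    ("H002", 2012), ("H002", 2012), ("H002", 2012),
]

def hospital_year_volume(records: Iterable[Tuple[str, int]]) -> Counter:
    """Count surgeries for each (hospital, year) pair.

    Each distinct pair is one observation (one hospital-year), so a
    hospital with data in several years contributes several observations.
    """
    return Counter(records)

volume = hospital_year_volume(discharges)
for (hospital_id, year), n_surgeries in sorted(volume.items()):
    print(hospital_id, year, n_surgeries)
```

In this toy input, hospital H001 appears in two years and therefore contributes two hospital-year observations.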

Statistical analyses

Objective 1: to assess whether hospitals’ FFS Medicare volume is representative of all-payer volume

We first visually inspected the relationship between FFS Medicare surgical volume and all-payer volume using scatterplots of volume and of volume rank. We ordered hospitals from low to high volume and assigned a continuous rank to each hospital based on FFS Medicare and all-payer volume, separately. Ties were resolved by using the average rank. In our analyses, a higher rank meant higher volume. Next, we calculated the Spearman correlation for FFS Medicare volume and all-payer volume. Third, we grouped the hospitals into quartiles separately for FFS Medicare and all-payer volume and evaluated the proportion of hospitals whose FFS Medicare volume was in a different quartile than their all-payer volume. We examined this overall and stratified by the all-payer volume of the hospital (low volume: ≤20 and high volume: >20 surgeries per hospital-year) to determine whether there was a difference in representativeness between low- and high-volume hospitals.
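The ranking, correlation, and quartile comparison described above can be sketched as follows. This is an illustration only: the volumes are made-up numbers, the function names are hypothetical, and the rank-based quartile cut is an assumption, since the text does not specify how quartile boundaries were set.

```python
from statistics import mean
from typing import List, Sequence

def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank from low to high (1 = lowest); tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)

def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))

def quartiles(values: Sequence[float]) -> List[int]:
    """Assign quartile 1-4 from average ranks (assumed cut rule)."""
    n = len(values)
    return [int((r - 1) * 4 / n) + 1 for r in average_ranks(values)]

def share_in_different_quartile(x: Sequence[float], y: Sequence[float]) -> float:
    qx, qy = quartiles(x), quartiles(y)
    return sum(a != b for a, b in zip(qx, qy)) / len(qx)

# Made-up hospital-year volumes for illustration.
ffs = [2, 5, 5, 12, 30, 41, 60, 90]
all_payer = [15, 18, 40, 35, 110, 150, 260, 300]

print(spearman(ffs, all_payer))
print(share_in_different_quartile(ffs, all_payer))
```

The stratified comparison would apply `share_in_different_quartile` separately to hospital-years with all-payer volume of 20 or fewer and more than 20, with quartiles still assigned on the full sample.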

Objective 2: to evaluate if hospital all-payer volume can be accurately predicted using FFS Medicare volume

We randomly split hospital-years into a development set (70%) and test set (30%). We first used the development set to build a model to predict all-payer surgical volume for patients 65 and older from FFS Medicare volume. The predictive variables were FFS Medicare volume, modeled as a linear spline with three knots, and county-level MA penetration (%). The model parameters were then applied to the test set to obtain predicted estimates of the number of cancer surgeries performed for patients 65 and older. Next, we inflated the predicted estimates by the national proportion of patients aged 65 and older undergoing cancer surgery to obtain predicted all-payer, all-ages hospital volume.

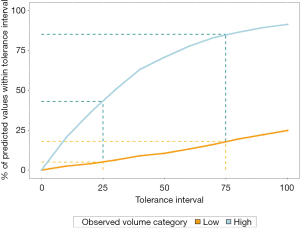
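As a rough illustration of the two-step prediction, the sketch below evaluates a linear spline with three knots plus an MA penetration term, then scales the result to all ages. The knot locations and coefficients are hypothetical placeholders (the fitted values are not reported here), and "inflating" is read as dividing by the proportion of patients aged 65 and older, which is an assumption about the exact arithmetic.

```python
from typing import List, Sequence

def linear_spline_basis(x: float, knots: Sequence[float]) -> List[float]:
    """Truncated-line basis [x, (x-k1)+, (x-k2)+, ...] for a linear spline."""
    return [x] + [max(0.0, x - k) for k in knots]

def predict_all_payer(
    ffs_volume: float,
    ma_penetration_pct: float,
    intercept: float,
    spline_coefs: Sequence[float],  # one per basis term: len(knots) + 1
    ma_coef: float,
    knots: Sequence[float],
    prop_65_plus: float,
) -> float:
    """Predict all-payer, all-ages volume from FFS Medicare volume.

    Step 1: linear predictor for volume among patients aged 65 and older.
    Step 2: divide by the share of patients aged 65 and older.
    """
    basis = linear_spline_basis(ffs_volume, knots)
    volume_65_plus = (
        intercept
        + sum(c * b for c, b in zip(spline_coefs, basis))
        + ma_coef * ma_penetration_pct
    )
    return volume_65_plus / prop_65_plus

# Hypothetical knots and coefficients, for illustration only.
KNOTS = (10.0, 50.0, 150.0)
prediction = predict_all_payer(
    ffs_volume=60,
    ma_penetration_pct=20,
    intercept=1.0,
    spline_coefs=(1.5, -0.2, -0.1, 0.05),
    ma_coef=0.1,
    knots=KNOTS,
    prop_65_plus=0.49,
)
print(round(prediction, 1))
```

With a proportion of 0.49, the second step multiplies the age-65-and-older estimate by roughly 2.04, so any error in the first step is scaled up by the same factor.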

We evaluated the concordance of the predicted and observed all-payer hospital volume by examining the correlation and absolute difference of the estimates. In addition, after calculating the absolute percent difference we explored the relative difference by plotting the tolerance curves stratified by low and high volume. The x-axis of the tolerance curve shows the absolute percent difference between the predicted and observed volume (“tolerance”) and the y-axis shows the percent of hospitals whose difference falls within that percentage. A higher percentage of observed estimates falling within a smaller tolerance interval indicates better accuracy.
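The tolerance curve can be computed as sketched below. The volumes are made-up numbers and the function names are hypothetical; in practice the function would be called once for low-volume and once for high-volume hospital-years to obtain the stratified curves.

```python
from typing import List, Sequence, Tuple

def absolute_percent_difference(
    predicted: Sequence[float], observed: Sequence[float]
) -> List[float]:
    """|predicted - observed| as a percentage of observed volume.

    Observed volume is assumed to be positive, which holds when every
    included hospital performed at least one surgery.
    """
    return [100 * abs(p - o) / o for p, o in zip(predicted, observed)]

def tolerance_curve(
    predicted: Sequence[float],
    observed: Sequence[float],
    tolerances: Sequence[float],
) -> List[Tuple[float, float]]:
    """For each tolerance (x-axis), the percent of hospital-years whose
    absolute percent difference is at or below it (y-axis)."""
    diffs = absolute_percent_difference(predicted, observed)
    n = len(diffs)
    return [(t, 100 * sum(d <= t for d in diffs) / n) for t in tolerances]

# Made-up observed and predicted volumes for four hospital-years.
observed = [10, 20, 100, 200]
predicted = [18, 22, 90, 260]

curve = tolerance_curve(predicted, observed, tolerances=[10, 25, 50, 75, 100])
for tolerance, pct_within in curve:
    print(tolerance, pct_within)
```

A curve that rises quickly at small tolerances corresponds to more accurate predictions.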

Analyses were conducted in Stata version 15 (StataCorp LLC, College Station, TX) and R software version 3.5.1 (R Foundation for Statistical Computing, Vienna, Austria). Memorial Sloan Kettering Cancer Center’s institutional review board determined the study to be exempt under IRB Protocol X16-039 because the data sources used are publicly available.

Results

There were 162,812 surgeries across 1,263 hospital-years between 2011 and 2013 (Table 1). Approximately 25% of hospitals’ total surgical volume was for patients with FFS Medicare coverage. Our cohort included 314 hospital-years meeting the criteria for low volume and 949 hospital-years meeting the criteria for high volume (a hospital can be considered low volume in one year and high volume in another year).

Table 1

| Cancer site | All-payer volume | Fee-for-service Medicare volume (% of all-payer volume) |
|---|---|---|
| Bones & Joints | 1,089 | 147 (13.5) |
| Breast | 22,895 | 4,635 (20.2) |
| Colorectal | 42,627 | 13,267 (31.1) |
| Gynecological | 4,953 | 1,289 (26.0) |
| Gastroesophageal | 13,568 | 2,747 (20.2) |
| Kidney | 14,891 | 4,063 (27.3) |
| Liver | 3,352 | 575 (17.2) |
| Lung/pleura/bronchus | 16,750 | 5,615 (33.5) |
| Ovarian | 7,361 | 1,581 (21.5) |
| Pancreas | 3,665 | 1,202 (32.8) |
| Prostate | 29,786 | 5,292 (17.8) |
| Sarcoma | 1,875 | 387 (20.6) |
| All cancer sites combined | 162,812 | 40,923 (25.1) |

Objective 1: to assess whether hospitals’ FFS Medicare volume is representative of all-payer volume

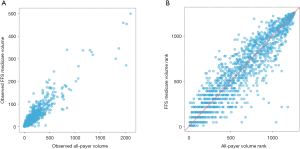

Figure 1 displays the scatterplots of FFS Medicare and all-payer volume and volume rank for individual hospital-years. As shown in Figure 1A, hospitals that have higher FFS Medicare volume tended to have a higher all-payer volume. Similarly, in Figure 1B, hospitals that are ranked higher in FFS Medicare volume are generally also ranked higher in all-payer volume. However, hospitals with lower FFS Medicare volume rank tend to have a wider range of all-payer volume rank. There was a positive association between hospitals’ FFS Medicare and all-payer volume (Spearman correlation r=0.92).

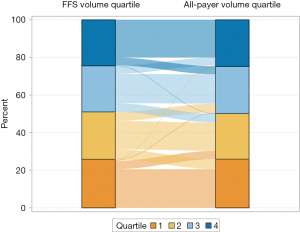

We first classified hospitals into quartiles based on FFS Medicare and all-payer volume. Figure 2 shows the flow of hospitals from their FFS Medicare quartile (left bar) to their all-payer volume quartile (right bar) to visualize whether hospitals were consistently classified. The width of the flow represents the proportion of hospitals moving between the same or different quartiles. Although we see some movement from FFS Medicare quartile to a different all-payer quartile, the majority of hospitals remained in the same quartile (71.2%). Of hospitals that moved, 95.3% shifted by only one quartile. Among hospitals with high all-payer volume, most hospitals’ FFS Medicare volume and all-payer volume were in the same quartile (68.1%) or differed by one quartile (30.1%). Similarly, among hospitals with low all-payer volume, hospitals’ FFS Medicare volume and all-payer volume were either in the same quartile (80.6%) or only differed by one quartile (19.4%). Overall, while results differ minimally between low and high-volume hospitals, a greater proportion of low volume hospitals did not move quartile ranks. Last, no hospitals that were grouped in the top quartile of FFS Medicare volume moved to the lowest quartile of all-payer volume. Further, less than 1% of hospitals moved from the bottom quartile based on FFS Medicare volume to the top quartile of all-payer volume.

Objective 2: to evaluate if hospital all-payer volume can be accurately predicted using FFS Medicare volume

There were 871 hospital-years (69.0%) in the development set and 392 hospital-years (31.0%) in the test set. The county-level MA penetration ranged from 1% to 60% (MA county-level penetration files). The estimated percent of patients aged 65 and older receiving any of the 12 cancer-directed surgeries was 49% (NIS 2012).

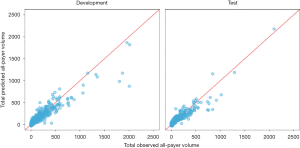

In general, the predicted results differed minimally between the test and development sets. The correlation was high between the predicted and observed all-payer volume (Figure 3; r=0.92 for development set, r=0.92 for test set). Although the correlation was high, the average difference between the predicted and observed volume was 41 surgeries (standard deviation 69.6) in the development set, where the average observed volume was 128 surgeries (standard deviation 210.6). Similarly, the average difference was 43 surgeries (standard deviation 61.0) in the test set, where the average observed volume was 130 surgeries (standard deviation 201.8). Results by each cancer site individually are presented in Table 2.

Table 2

| Cancer site | Development: Mean | Development: SD | Development: Q1 | Development: Median | Development: Q3 | Test: Mean | Test: SD | Test: Q1 | Test: Median | Test: Q3 |
|---|---|---|---|---|---|---|---|---|---|---|
| Bones & Joints | 3.7 | 6.15 | 0.2 | 1.0 | 4.8 | 3.3 | 5.94 | 0.2 | 1.1 | 2.8 |
| Breast | 10.5 | 17.22 | 1.9 | 4.7 | 11.6 | 12.3 | 20.83 | 2.5 | 5.2 | 12.1 |
| Colorectal | 20.5 | 18.43 | 7.0 | 14.9 | 29.8 | 20.8 | 20.44 | 7.4 | 15.2 | 30.2 |
| Gynecological | 3.6 | 4.33 | 1.3 | 2.5 | 4.3 | 3.3 | 4.83 | 1.0 | 2.2 | 3.9 |
| Gastroesophageal | 7.4 | 13.14 | 1.2 | 2.7 | 6.6 | 7.7 | 12.11 | 1.4 | 3.1 | 7.9 |
| Kidney | 6.3 | 7.96 | 1.8 | 3.8 | 7.1 | 6.5 | 10.83 | 1.6 | 2.8 | 6.7 |
| Liver | 4.2 | 8.40 | 0.8 | 1.3 | 3.6 | 5.2 | 8.70 | 0.6 | 1.8 | 5.1 |
| Lung/pleura/bronchus | 20.6 | 24.70 | 5.7 | 12.2 | 26.3 | 22.7 | 25.09 | 6.3 | 13.6 | 30.9 |
| Ovarian | 5.2 | 7.18 | 0.9 | 2.4 | 5.8 | 5.0 | 7.08 | 0.9 | 2.2 | 5.3 |
| Pancreas | 4.7 | 7.04 | 1.3 | 2.3 | 4.7 | 4.2 | 5.54 | 1.3 | 2.6 | 4.3 |
| Prostate | 16.2 | 46.37 | 3.0 | 7.0 | 14.2 | 18.8 | 41.04 | 2.8 | 7.7 | 16.6 |
| Sarcoma | 2.6 | 5.93 | 0.2 | 0.8 | 1.7 | 3.2 | 6.00 | 0.2 | 1.1 | 2.8 |
| All cancer sites combined | 41.4 | 69.59 | 12.2 | 24.0 | 44.7 | 43.3 | 61.02 | 12.7 | 24.2 | 45.9 |

Figure 4 displays the tolerance curves stratified by high and low volume. The tolerance curve shows the percent of a hospital’s predicted volume estimates that fall within a given interval of the hospital’s observed volume. For example, 43% of predicted values for high volume and 5% of predicted values for low volume hospitals were within 25% of their observed volumes; 85% and 18% of predicted values were within 75% of observed volumes for high and low volume hospitals, respectively, as identified by the drop lines in Figure 4. A larger percentage of hospitals’ predicted all-payer volume was within the specified tolerance for high volume hospitals than for low volume hospitals. Thus, we found that the accuracy of the model was better for high volume hospitals.

Discussion

We examined the extent to which hospitals’ all-payer cancer surgical volume could be classified and predicted based on FFS Medicare claims. We found that FFS Medicare volume is generally representative of all-payer volume as demonstrated by the high, positive correlation observed. When hospitals were categorized by quartiles, fewer than 30% of all hospitals were in different FFS Medicare and all-payer quartiles. Therefore, if we were to approximate a hospital’s volume based on FFS Medicare volume, the quartile classification would be consistent with the all-payer quartile classification for the majority of hospitals. This is especially true for hospitals in the highest and lowest FFS volume quartiles, which are typically the targets for most quality improvement initiatives. Careful consideration must be given to using this approach to drive incentives on a more granular scale than quartile volume classification in order to avoid inappropriately penalizing or rewarding hospitals.

There are some limitations to consider when interpreting the results. Because we only used hospitals from six states, generalizability across the U.S. is unknown. However, given the geographic and demographic variability of the states studied, we hypothesize that the results presented are nationally representative. There could also be a mismatch of the linkage of some hospitals across FFS Medicare and all-payer datasets because we used a crosswalk of hospital identifiers from the middle year [2012]. However, given that our data are at most one year from 2012, we suspect minimal changes over this time.

In the absence of the mandatory reporting of surgical volume by hospitals, approximation methods from publicly available resources are the next best option. Currently, the onus remains on both providers and policymakers to make cancer volume information public (19). Organizations in California have spearheaded these efforts by sharing methods on how to use administrative or discharge data to report volume, with hopes that other states would follow suit (20). In 2018, the Pennsylvania Health Care Cost Containment Council reported on the number of cancer-related surgeries performed at Pennsylvania hospitals based on California’s report (21-23). Proponents of reporting cancer volume argue that at the very least patients deserve to have access to hospital volume information to inform decision-making (24). And while patients are interested in volume, it does not serve as a replacement for surgical outcomes data, but rather can be used as additional information to inform decision-making or quality improvement efforts (7). Information on hospital cancer surgery volume could provide more comprehensive data to guide existing policy-relevant research, including studies on the centralization of surgery, the volume-outcome relationship, and minimum surgical case-load requirements. Further, investigation into an improved model to predict volume by cancer site could be beneficial, given patients’ interest in cancer-specific surgical volumes.

Conclusions

Our study found that FFS Medicare volume is generally indicative of all-payer volume, especially when hospital volume is classified into quartiles. However, all-payer volume cannot be estimated with high accuracy at the hospital level from publicly available data. Future research can examine alternative approaches to improve prediction of all-payer cancer surgical volume, especially for low-volume hospitals.

Acknowledgments

Funding: P30 CA 008748 from the National Institutes of Health Core to Memorial Sloan Kettering Cancer Center (All authors).

Footnote

Conflicts of Interest: All authors have completed the ICMJE uniform disclosure form (available at http://dx.doi.org/10.21037/jhmhp.2020.01.02). DGL reports grants from NIH Core Grant P30 CA 008748, during the conduct of the study, and current employment with GoodRx, Inc. JAL reports grants from NIH Core Grant P30 CA 008748, during the conduct of the study. KSP reports grants from NIH Core Grant P30 CA 008748, during the conduct of the study. PBB reports personal fees and non-financial support from American Society for Health-System Pharmacists, personal fees and non-financial support from Gilead Pharmaceuticals, personal fees from WebMD, personal fees from Goldman Sachs, personal fees from Defined Health, personal fees and non-financial support from Vizient, personal fees and non-financial support from Hematology Oncology Pharmacy Assoc, personal fees from JMP Securities, personal fees from Mercer, personal fees and non-financial support from United Rheumatology, personal fees from Foundation Medicine, personal fees from Grail, personal fees from Morgan Stanley, personal fees from NYS Rheumatology Society, personal fees and non-financial support from Oppenheimer & Co, personal fees from Cello Health, personal fees and non-financial support from Oncology Analytics, personal fees from Anthem, personal fees from Magellan Health, personal fees and non-financial support from Kaiser Permanente Institute for Health Policy, personal fees and non-financial support from Congressional Budget Office, personal fees and non-financial support from America’s Health Insurance Plans, grants from Kaiser Permanente, grants from Arnold Ventures, personal fees and non-financial support from Geisinger, personal fees from EQRx, outside the submitted work. ALS reports grants from NIH Core Grant P30 CA 008748, during the conduct of the study. KSP serves as an unpaid editorial board member of Journal of Hospital Management and Health Policy from Sep. 2019 to Aug. 2021. The other authors have no conflicts of interest to declare.

Ethical Statement: The authors are accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

Open Access Statement: This is an Open Access article distributed in accordance with the Creative Commons Attribution-NonCommercial-NoDerivs 4.0 International License (CC BY-NC-ND 4.0), which permits the non-commercial replication and distribution of the article with the strict proviso that no changes or edits are made and the original work is properly cited (including links to both the formal publication through the relevant DOI and the license). See: https://creativecommons.org/licenses/by-nc-nd/4.0/.

References

- Bach PB, Cramer LD, Schrag D, et al. The influence of hospital volume on survival after resection for lung cancer. N Engl J Med 2001;345:181-8. [Crossref] [PubMed]

- Begg CB, Cramer LD, Hoskins WJ, et al. Impact of hospital volume on operative mortality for major cancer surgery. JAMA 1998;280:1747-51. [Crossref] [PubMed]

- Birkmeyer JD, Siewers AE, Finlayson EV, et al. Hospital volume and surgical mortality in the United States. N Engl J Med 2002;346:1128-37. [Crossref] [PubMed]

- Birkmeyer JD, Sun Y, Wong SL, et al. Hospital volume and late survival after cancer surgery. Ann Surg 2007;245:777-83. [Crossref] [PubMed]

- Schrag D, Cramer LD, Bach PB, et al. Influence of hospital procedure volume on outcomes following surgery for colon cancer. JAMA 2000;284:3028-35. [Crossref] [PubMed]

- Dimick JB, Finlayson SRG, Birkmeyer JD. Regional Availability Of High-Volume Hospitals For Major Surgery. Health Aff (Millwood) 2004;Suppl Variation:VAR45-53.

- Chimonas S, Fortier E, Li DG, et al. Facts and Fears in Public Reporting: Patients’ Information Needs and Priorities When Selecting a Hospital for Cancer Care. Medical Decision Making 2019;39:632-41. [Crossref] [PubMed]

- U.S. News & World Report. Best Hospitals for Cancer. 2018. Available online: https://health.usnews.com/best-hospitals/rankings/cancer, 2018.

- George A, Adams Z, Majumder A, et al. U.S. News & World Report 2018-2019 Best Hospitals Procedures & Conditions Ratings. 2018. Available online: https://www.usnews.com/static/documents/health/best-hospitals/BHPC_Methodology_2018-19.pdf. Accessed June 24, 2019.

- The Leapfrog Group. Compare Hospitals. 2019. Available online: http://www.leapfroggroup.org/compare-hospitals, 2018.

- California Office of Statewide Health Planning and Development. Volume of Cancer Surgeries Performed in California Hospitals. 2019. Available online: https://oshpd.ca.gov/data-and-reports/healthcare-quality/volume-cancer-surgery-reports/, 2018.

- Johns Hopkins Medicine. Surgical Volumes. 2019. Available online: https://www.hopkinsmedicine.org/patient_safety/surgical_volumes.html. Accessed June 4, 2019.

- Healthcare Cost and Utilization Project (HCUP). Overview of State Inpatient Databases (SID). 2018. Available online: https://www.hcup-us.ahrq.gov/sidoverview.jsp.

- Florida Agency for Health Care Administration. Order Data/Data Dictionary. 2017. Available online: http://www.floridahealthfinder.gov/Researchers/OrderData/order-data.aspx, 2018.

- American Hospital Association. American Hospital Association Annual Survey Database. 2012. Available online: http://www.ahadata.com/aha-annual-survey-database-asdb/.

- Healthcare Cost and Utilization Project (HCUP). Overview of the National (Nationwide) Inpatient Sample (NIS). 2018. Available online: https://www.hcup-us.ahrq.gov/nisoverview.jsp, 2018.

- Centers for Medicare & Medicaid Services. MA State/County Penetration. 2012. Available online: https://www.cms.gov/Research-Statistics-Data-and-Systems/Statistics-Trends-and-Reports/MCRAdvPartDEnrolData/MA-State-County-Penetration.html, 2018.

- Lavery JA, Lipitz-Snyderman A, Li DG, et al. Identifying Cancer-Directed Surgeries in Medicare Claims: A Validation Study Using SEER-Medicare Data. JCO Clin Cancer Inform 2019;3:1-24. [Crossref] [PubMed]

- Pronovost PJ. Blockbuster Data: How Reporting Surgical Volumes Could Save Lives. Armstrong Institute Blog. 2017. Available online: https://armstronginstitute.blogs.hopkinsmedicine.org/2017/07/05/blockbuster-data-how-reporting-surgical-volumes-could-save-lives/

- Baker L, O’Sullivan M. Small Numbers Can Have Big Consequences: Many California Hospitals Perform Dangerously Low Numbers of Cancer Surgeries. 2017. Available online: https://www.chcf.org/publication/small-numbers-can-have-big-consequences-many-california-hospitals-perform-dangerously-low-numbers-of-cancer-surgeries/. Accessed June 6, 2019.

- Pennsylvania Health Care Cost Containment Council. Number of Cancer Surgeries Performed in Pennsylvania Hospitals. Cancer Surgery Volume 2019. Available online: http://www.phc4.org/reports/csvr/18/. Accessed June 6, 2019.

- Clarke CA, Asch SM, Baker L, et al. Public Reporting of Hospital-Level Cancer Surgical Volumes in California: An Opportunity to Inform Decision Making and Improve Quality. J Oncol Pract 2016;12:e944-8. [Crossref] [PubMed]

- McCullough M. Need cancer surgery in Pennsylvania? New online tool can help with the decision. The Philadelphia Inquirer. 2018.

- Clark C. The 'Dirty Little Secret' of Low Cancer Surgery Volumes. MedPage Today. September 22, 2016.

Cite this article as: Li DG, Lavery JA, Panageas KS, Bach PB, Lipitz-Snyderman A. Estimating hospitals’ all-payer volume of cancer surgeries from Fee-for-Service Medicare claims. J Hosp Manag Health Policy 2020;4:13.